In September 2024, OpenAI released o1, a model trained to do something its predecessors did only when coaxed: work through a problem step by step before answering. It wasn't just pattern matching anymore. It was reasoning, or at least something that looked remarkably like it. Within months, every major AI lab was racing to build its own reasoning model. The stakes? Nothing less than what many see as the path to artificial general intelligence.

What Reasoning Actually Means

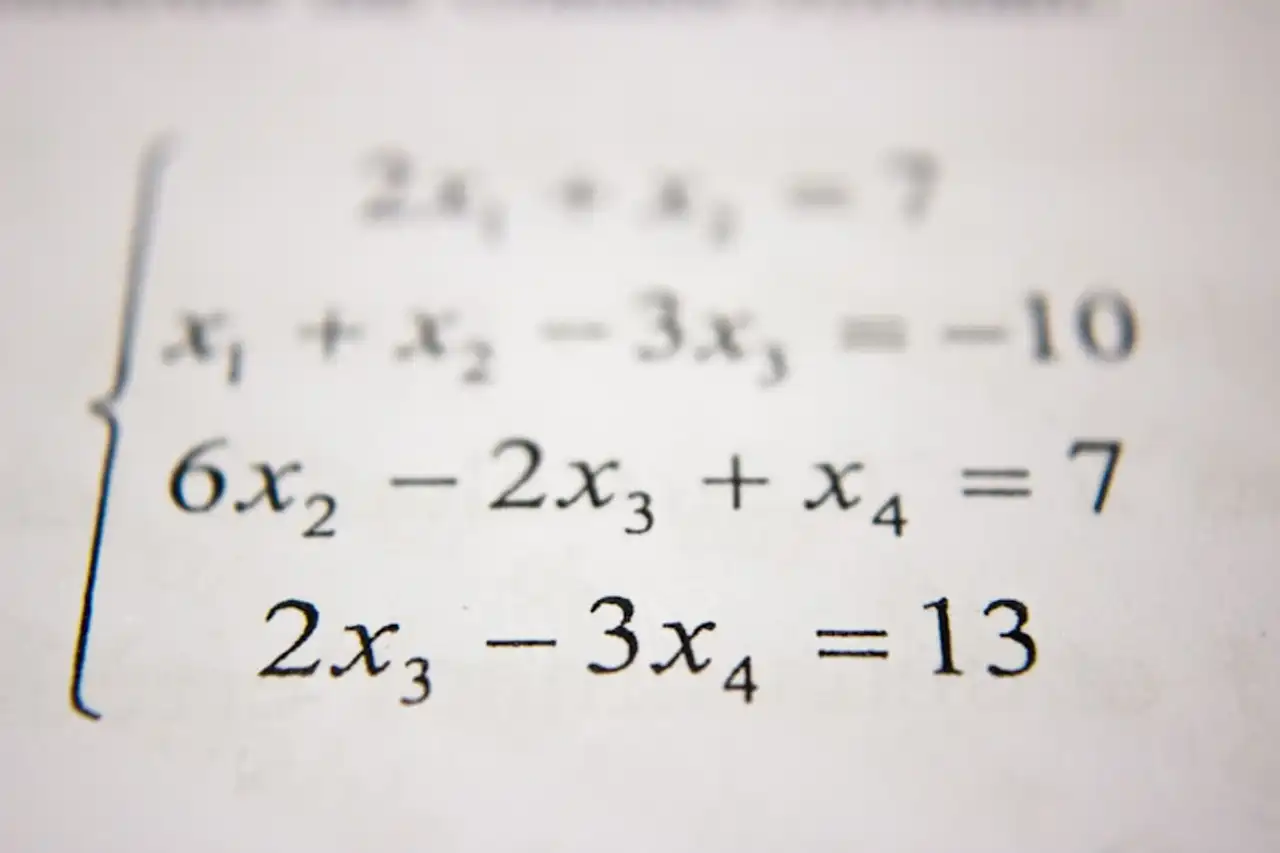

Traditional language models answer questions by predicting the most likely next word. This works amazingly well for many tasks, but it fails spectacularly when problems require multi-step logical deduction. Ask GPT-4 to solve a novel math problem, and it often stumbles. Ask it to plan a complex project with interdependencies, and it loses the thread.

Reasoning models work differently. They're trained to generate a chain of thought before answering: breaking problems into steps, checking their own work, and backtracking when they hit dead ends. Inference is slower and more expensive, but the results on hard problems are dramatically better.

The Competing Approaches

The field has split into several camps:

OpenAI's o-series uses reinforcement learning to train models to reason at inference time. The o1 model, followed by o3, showed remarkable performance on math, coding, and science benchmarks. But the approach is computationally expensive. The model generates a long internal reasoning trace before it answers, and those hidden reasoning tokens are billed like any other output, so a single query can cost 10 to 50 times more than a standard GPT-4 response.

Google DeepMind's Gemini team has integrated reasoning into their models more seamlessly, with "thinking" modes that can be toggled on and off. Their approach aims for efficiency — getting reasoning-like performance without the massive compute overhead.

Anthropic has focused on what it calls "extended thinking" in Claude, which lets the model work through problems in a structured way while still showing and explaining its reasoning to users.

DeepSeek, the Chinese lab that shocked the industry in early 2025 with its openly released R1 model, demonstrated that reasoning capabilities could be achieved with dramatically less compute than Western labs had assumed necessary.

The Benchmarks War

Reasoning models have obliterated benchmarks that were considered extremely difficult just a year ago. ARC-AGI, a test designed specifically to measure general reasoning ability, saw scores jump from near zero to human-competitive in 18 months. Competition math problems that stumped earlier models are now solved routinely.

But benchmarks are a double-edged sword. Critics argue that models may be overfitting to test formats rather than developing genuine reasoning ability. When presented with problems that look different from typical benchmarks, even if the underlying logic is the same, performance can drop sharply.

Why It Matters

If AI can genuinely reason, the implications cascade across every field. Scientific research could be accelerated by AI systems that formulate hypotheses and design experiments. Software engineering could be transformed by models that understand system architecture, not just code syntax. Strategic planning, legal analysis, medical diagnosis — every domain that requires structured thinking becomes an AI opportunity.

The question that haunts researchers is whether current approaches will scale to genuine reasoning, or whether we're seeing a sophisticated mimic that will hit a wall. The billions being bet say the industry believes it's the real thing. Whether they're right may be the most consequential question in technology today.