Transformers dominate AI headlines, but a different architecture is proving highly effective in certain problem domains: Graph Neural Networks (GNNs). If your problem involves relationships, networks, molecular structures, or systems where entities interact in complex patterns, GNNs can outperform transformers while often being more efficient and easier to interpret.

What Makes Graphs Special

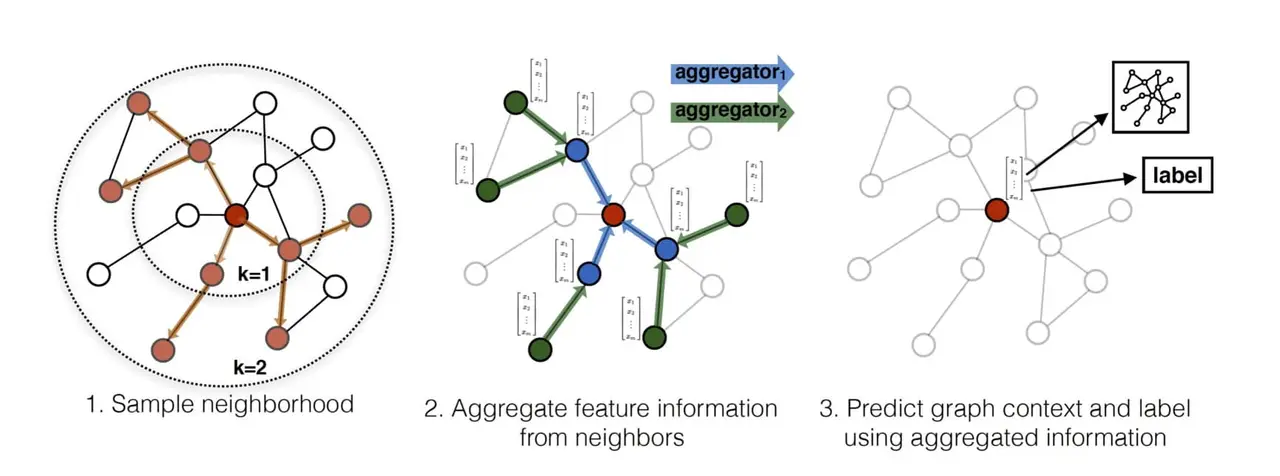

Graphs are fundamentally different from sequences or grids. A social network has nodes (people) and edges (relationships). A molecule has atoms connected by bonds. A transportation network has locations connected by routes. Convolutional networks are built for grids such as images, and sequence models such as transformers are built for text. Graph neural networks operate directly on graphs and treat relationships as first-class citizens: each node updates its representation by aggregating information from its neighbors.
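The neighbor-aggregation idea at the heart of most GNNs, often called message passing, can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: the graph is a plain adjacency dictionary, node features are short lists of floats, and the update is an unweighted mean over a node and its neighbors. Real GNN layers also apply learned weight matrices and a nonlinearity, which are omitted here. The helper name `message_passing_step` is hypothetical.

```python
from typing import Dict, List

Graph = Dict[int, List[int]]       # node id -> neighbor ids
Features = Dict[int, List[float]]  # node id -> feature vector


def message_passing_step(graph: Graph, features: Features) -> Features:
    """One round of mean aggregation over each node and its neighbors.

    Hypothetical helper: no learned weights or nonlinearity, only the
    flow of information along edges.
    """
    updated: Features = {}
    for node, vec in features.items():
        # The node's own vector plus one "message" from each neighbor.
        messages = [vec] + [features[nbr] for nbr in graph.get(node, [])]
        updated[node] = [
            sum(m[d] for m in messages) / len(messages) for d in range(len(vec))
        ]
    return updated


# Path graph 0 - 1 - 2; undirected, so each edge is listed in both directions.
graph: Graph = {0: [1], 1: [0, 2], 2: [1]}
features: Features = {0: [1.0, 0.0], 1: [0.0, 0.0], 2: [0.0, 1.0]}

h1 = message_passing_step(graph, features)  # each node sees its 1-hop neighbors
h2 = message_passing_step(graph, h1)        # each node sees its 2-hop neighbors
print(h1[0], h2[0])
```

After one step, node 0 only reflects itself and node 1; after a second step, its second feature becomes nonzero, meaning information from node 2 has reached it through node 1. Stacking k such steps lets each node see its k-hop neighborhood.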

Why This Matters

A GNN trained on a social network can identify influential nodes, predict which links will form, and detect communities, often more effectively than sequence models applied to flattened network data. A GNN trained on molecular graphs can predict properties of molecules that have not yet been synthesized, helping accelerate drug discovery. A GNN trained on road networks can help optimize logistics and predict traffic patterns.
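Link prediction is often framed as scoring a pair of nodes from their learned embeddings. The sketch below assumes the embeddings already come from a trained GNN and uses an inner-product decoder, sigmoid(z_u · z_v), a common choice in graph autoencoders. The node names, embedding values, and the `link_score` helper are hypothetical.

```python
import math
from typing import Dict, List


def link_score(z_u: List[float], z_v: List[float]) -> float:
    """Inner-product decoder: sigmoid of the dot product of two embeddings."""
    dot = sum(a * b for a, b in zip(z_u, z_v))
    return 1.0 / (1.0 + math.exp(-dot))


# Hypothetical embeddings, assumed to come from an already-trained GNN.
embeddings: Dict[str, List[float]] = {
    "alice": [0.9, 0.1],
    "bob": [0.8, 0.2],     # points in a similar direction to alice
    "carol": [-0.7, 0.6],  # points away from alice
}

candidates = [("alice", "carol"), ("alice", "bob")]
ranked = sorted(
    candidates,
    key=lambda pair: link_score(embeddings[pair[0]], embeddings[pair[1]]),
    reverse=True,
)
for u, v in ranked:
    print(u, v, round(link_score(embeddings[u], embeddings[v]), 3))
```

Pairs whose embeddings point in similar directions score above 0.5 and rank first as likely new links; the GNN's job during training is to produce embeddings for which this holds.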

Applications Exploding

By early 2026, GNNs are deployed across many domains: recommendation systems use them to model user-item interaction graphs, fraud detection systems use them to spot suspicious patterns in transaction networks, drug discovery pipelines use them to predict molecular properties, and urban planners use them to optimize city infrastructure.
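To make the user-item graph idea concrete, here is a minimal sketch of LightGCN-style propagation. LightGCN is a published GNN recommender design in which each node's embedding is repeatedly replaced by a normalized sum of its neighbors' embeddings, and the per-layer outputs are averaged. Assumptions: the interaction data, user and item names, and helper functions are hypothetical; one-hot vectors stand in for the embeddings a real system would learn; and there is no training loop.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

Vec = List[float]


def lightgcn_propagate(
    interactions: List[Tuple[str, str]], emb: Dict[str, Vec], layers: int = 2
) -> Dict[str, Vec]:
    """LightGCN-style propagation: no weights, no nonlinearity, mean over layers."""
    # Bipartite neighbor lists built from (user, item) interaction pairs.
    nbrs: Dict[str, List[str]] = defaultdict(list)
    for user, item in interactions:
        nbrs[user].append(item)
        nbrs[item].append(user)

    dim = len(next(iter(emb.values())))
    outputs = [emb]
    current = emb
    for _ in range(layers):
        nxt: Dict[str, Vec] = {}
        for node in current:
            acc = [0.0] * dim
            for nbr in nbrs[node]:
                # Symmetric normalization 1 / sqrt(|N(node)| * |N(nbr)|).
                w = 1.0 / math.sqrt(len(nbrs[node]) * len(nbrs[nbr]))
                for d in range(dim):
                    acc[d] += w * current[nbr][d]
            nxt[node] = acc
        outputs.append(nxt)
        current = nxt

    # Final embedding: average of the layer-0 through layer-K embeddings.
    return {
        node: [sum(out[node][d] for out in outputs) / len(outputs) for d in range(dim)]
        for node in emb
    }


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def recommend(
    user: str, items: List[str], interactions: List[Tuple[str, str]], final: Dict[str, Vec]
) -> str:
    """Highest-scoring item the user has not interacted with yet."""
    seen = {i for u, i in interactions if u == user}
    scores = {i: dot(final[user], final[i]) for i in items if i not in seen}
    return max(scores, key=scores.get)


# Hypothetical interaction data.
interactions = [("u1", "i1"), ("u2", "i1"), ("u2", "i2"), ("u3", "i3")]
nodes = ["u1", "u2", "u3", "i1", "i2", "i3"]
# One-hot vectors stand in for learned initial embeddings.
emb = {n: [1.0 if j == k else 0.0 for j in range(len(nodes))] for k, n in enumerate(nodes)}

final = lightgcn_propagate(interactions, emb)
print(recommend("u1", ["i1", "i2", "i3"], interactions, final))
```

Here `u1` has only interacted with `i1`, which `u2` also interacted with alongside `i2`, so propagation gives `u1` and `i2` overlapping embeddings and `i2` receives a positive score. `i3` sits in a disconnected part of the graph and scores zero.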

A major e-commerce company found that GNN-based recommendations outperformed transformer-based approaches by 18% on its specific task of predicting purchases from behavioral networks. The same company reduced recommendation latency by 60% by switching from transformers to GNNs.

The Hybrid Future

Current research is blurring the boundary between graph and sequence processing. Hybrid architectures that combine GNN and transformer components are emerging, allowing a single model to handle graph-structured data and sequential patterns at the same time.
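One common hybrid pattern, used in graph transformers such as GraphGPS, runs a local message-passing branch and a global attention branch side by side and sums their outputs. The sketch below is a simplified, weight-free illustration of that pattern under stated assumptions: queries, keys, and values are the raw node features (no learned projections), the local branch is a mean over neighbors, and there is no feed-forward block. All function names are hypothetical.

```python
import math
from typing import Dict, List

Vec = List[float]


def local_mean(graph: Dict[int, List[int]], h: Dict[int, Vec]) -> Dict[int, Vec]:
    """Local branch: mean over each node and its graph neighbors."""
    out: Dict[int, Vec] = {}
    for node, vec in h.items():
        msgs = [vec] + [h[n] for n in graph.get(node, [])]
        out[node] = [sum(m[d] for m in msgs) / len(msgs) for d in range(len(vec))]
    return out


def global_attention(h: Dict[int, Vec]) -> Dict[int, Vec]:
    """Global branch: scaled dot-product self-attention over all nodes.

    Queries, keys, and values are the raw features (no learned projections).
    """
    nodes = list(h)
    dim = len(h[nodes[0]])
    out: Dict[int, Vec] = {}
    for q in nodes:
        scores = [sum(a * b for a, b in zip(h[q], h[k])) / math.sqrt(dim) for k in nodes]
        top = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        out[q] = [sum(w * h[k][d] for w, k in zip(weights, nodes)) / total for d in range(dim)]
    return out


def hybrid_layer(graph: Dict[int, List[int]], h: Dict[int, Vec]) -> Dict[int, Vec]:
    """Residual input plus the local and global branches, summed per node."""
    local = local_mean(graph, h)
    glob = global_attention(h)
    return {n: [h[n][d] + local[n][d] + glob[n][d] for d in range(len(h[n]))] for n in h}


# Two nodes with no edge between them: message passing alone cannot connect them.
graph = {0: [], 1: []}
h = {0: [1.0, 0.0], 1: [0.0, 1.0]}
print(local_mean(graph, h)[0], hybrid_layer(graph, h)[0])
```

With no edges at all, the local branch leaves node 0 unchanged, but the attention branch still mixes in node 1's features. That is the practical appeal of such hybrids: message passing captures explicit graph structure, while attention captures long-range interactions the edges do not encode.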

For many applications, specialized graph architectures can outperform general-purpose transformers. The future of AI is not just bigger transformers; it is choosing the right architecture for each specific problem.